This is a blog post that I’ve had at the back of my mind for a good 6 months or so. The pieces of the puzzle came together after the Gestalt IT Tech Field Day event in Boston. After spending the best part of a week with some very clever virtualisation pros, I think I’ve managed to marshal the ideas that have been trying to make the cerebral cortex to WordPress migration for some time!

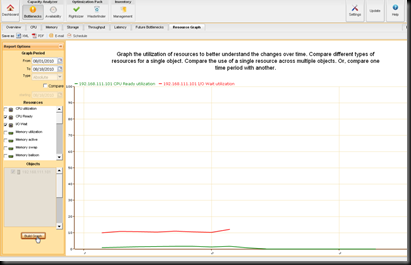

Managing an environment for capacity and performance, be it physical or virtual, requires tools that can provide you with a view along the timeline. Often the key difference between dedicated “capacity management” offerings and performance management tools is the scale of that timeline.

Short Term : Performance & Availability

Here we are looking at timings within a few seconds or minutes (or less). This is where a toolset is focused on current performance for a particular metric, be it the response time to load a web application, the utilisation of a processor core or the command operations rate on a disk array. The tools best placed to give us that information need to be capable of processing a large volume of data very quickly, because they have to pull in a given metric at a very frequent interval. The more frequently you can sample the data, the better the quality of output the tool can give. This can present a problem in large scale deployments, as many tools have to write this data out to a table in a database. That potentially tethers the performance of a monitoring tool to the underlying storage available to that tool, which can of course be increased, but sometimes at quite a significant cost. As a result you may want to scope the use of such tools to only the workloads that require short term, high resolution monitoring.

In a production environment with a known baseline workload, tools that use a dynamic threshold or profile for alerting on a metric can be very useful (for example Xangati or vCenter Operations). If you don’t have a workload that can be suitably baselined (and note that the baseline can vary with your business cycle, so may well take 12 months to establish!) then dynamic thresholds are not of as much use.
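To make the idea of a dynamic threshold a little more concrete, here is a minimal Python sketch. It is not how Xangati or vCenter Operations work internally; it simply assumes a rolling baseline (the mean of the previous `window` samples) and flags any sample more than `k` standard deviations above it. The function name, the window size and the sample data are all made up for illustration.

```python
from statistics import mean, stdev


def dynamic_threshold_alerts(samples, window=12, k=3.0):
    """Return the indices of samples that exceed a rolling baseline.

    The threshold for each sample is mean + k * stdev of the `window`
    samples immediately before it (an assumed, simplified profile).
    """
    alerts = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        limit = mean(baseline) + k * stdev(baseline)
        if samples[i] > limit:
            alerts.append(i)
    return alerts


# Hypothetical CPU utilisation samples (percent), one per polling interval.
cpu = [40, 42, 41, 39, 40, 43, 41, 40, 42, 41, 40, 39, 41, 95, 42]
print(dynamic_threshold_alerts(cpu))
```

Note that the sketch needs a full window of history before it can alert at all, which is the small scale version of the baselining problem described above: without enough representative history, the threshold has nothing sensible to adapt to.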

Availability tools have less of a reliance on a high performance data layer, as they are essentially storing a single bit of data on a given metric. This means the toolset can scale pretty well. The key part of availability monitoring is the visualisation and reporting layer. There is no point only displaying that data on a beautiful and elegant dashboard if no one is there to see it (and according to the Zen theory of network operations, would it change if there was no one there to watch it?). The data needs to be fed into a system that best allows an action to be taken, even if it’s an SMS or page to someone who is asleep. In this kind of case, having suitable thresholds is important: you don’t want to be setting off fire alarms for a blip in a system that does not affect the end service. Know the dependencies of the service and try to ensure that the root cause alert is the first one sent out. You need to know that the router that affects 10,000 websites is out long before you get alerts for those individual websites.
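The router example can be sketched in a few lines of Python. This is only an illustration under simple assumptions: each item has at most one upstream dependency, held in a hypothetical `depends_on` map, and an alert is suppressed whenever anything upstream of it is also failing. Real dependency models are usually richer than this.

```python
def root_cause_alerts(failing, depends_on):
    """Return failing items that have no failing upstream dependency.

    `depends_on` maps an item to the single item it relies on
    (an assumed, simplified dependency model).
    """
    failing = set(failing)
    roots = []
    for item in sorted(failing):
        parent = depends_on.get(item)
        seen = set()  # guards against accidental loops in the map
        suppressed = False
        while parent is not None and parent not in seen:
            if parent in failing:
                suppressed = True
                break
            seen.add(parent)
            parent = depends_on.get(parent)
        if not suppressed:
            roots.append(item)
    return roots


# Hypothetical topology: websites sit on web servers behind one router.
depends_on = {
    "site-a": "web-01",
    "site-b": "web-01",
    "site-c": "web-02",
    "web-01": "router-1",
    "web-02": "router-1",
}
failing = ["site-a", "site-b", "site-c", "web-01", "router-1"]
print(root_cause_alerts(failing, depends_on))
```

With the router down, only the router alert goes out; the website alerts are held back because something upstream of them is already known to be failing.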

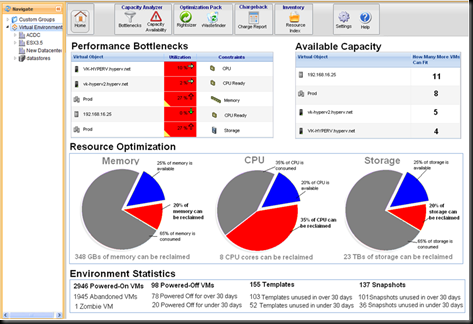

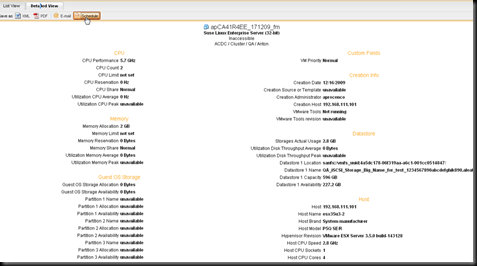

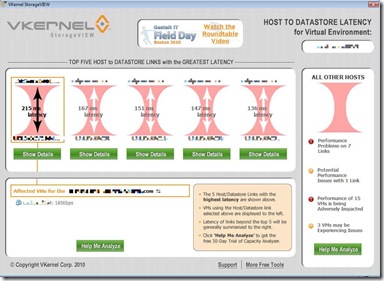

Medium Term : Trending & Optimisation

Where the timeline goes beyond “what’s wrong now”, you can start to look at what’s going to go wrong soon. This is edge of the crystal ball stuff, where predictions are made in the order of days or weeks. Based on utilisation data collected over a given period, we can assess whether we have sufficient capacity to provide an acceptable service level in the near future. At this stage, adjustments can be made to the infrastructure in the form of resource balancing (by storage or traditional load), and tweaks can also be made to virtual machine configuration to “rightsize” an environment. By using these techniques it is possible to reclaim over-allocated space and delay potential hardware expansions. This is especially valuable where there may be a long lead time on a hardware order. The types of recommendations generated by the capacity optimisation components of VKernel, NetApp (Akorri) and Solarwinds products are great examples of rightsizing calculations. As the environment scales up, we are looking not only for optimisations but also for potential automated remediation (within the bounds of a change controlled environment), which would save time and therefore money.
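As a rough illustration of the medium term prediction, the Python sketch below fits a straight line through daily usage figures and estimates how many days remain before a capacity limit is reached. It assumes growth is roughly linear, which is a simplification, and it is not the method used by any of the products mentioned above. The function name and the figures are invented for the example.

```python
def days_until_full(daily_used, capacity):
    """Estimate days remaining until `capacity` is reached.

    Fits a least-squares straight line through one usage sample per day
    and extrapolates it. Returns None if usage is flat or shrinking,
    or if there are fewer than two samples.
    """
    n = len(daily_used)
    if n < 2:
        return None
    x_mean = (n - 1) / 2
    y_mean = sum(daily_used) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(daily_used))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = y_mean - slope * x_mean
    day_full = (capacity - intercept) / slope
    # Days counted from the most recent sample, never negative.
    return max(0.0, day_full - (n - 1))


# Hypothetical datastore usage in GB over five days, 1000 GB capacity.
used_gb = [500, 510, 520, 530, 540]
print(days_until_full(used_gb, 1000))
```

If the answer comes back shorter than the lead time on a hardware order, that is the cue to start rebalancing or rightsizing now rather than later.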

Long Term capacity analysis : When do we need to migrate Data centers ?

Trying to predict what is going to happen to an IT infrastructure in the long term is a little like trying to predict the weather in 5 years’ time: you know roughly what might happen, but you don’t really know when. Taking a tangent away from the technology side of things, this is where the IT strategy comes in, knowing what applications are likely to come into the pipeline. Without this knowledge you can only guess how much capacity you will need in the long term. The process can be bidirectional though, with the information from a capacity management function being fed back into the wider picture for architectural strategy. For example, should a lack of physical space be discovered, this may combine with a strategy to refresh existing servers with blades. Larger enterprises will often deploy dedicated capacity management software to do this (for example Metron’s Athene product, which will model capacity for not only the virtual but also the physical environment).

Long term trending is a key part of a capacity management strategy, but it needs to be blended with a solution that allows environmental modelling and “what if” scenarios. Within the virtual environment, the scheduled modelling feature of VKernel’s vOperations Suite is possibly the best example of this that I’ve come across so far. All that is missing is an API to link to any particular enterprise architecture applications. When planning for growth, not only must the growth of the application set be considered but also the expansion in the management framework around it, including but not limited to backup and the short to medium term monitoring solutions. Unless you are consuming your IT infrastructure as a service, you will not be able to get away with a suite that only looks at the virtual piece of the puzzle. Power, cooling and available space need to be considered, and if you look far enough into the future you may want to look at some new premises!
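A “what if” scenario can be as simple as replaying planned projects against the limits you already know about. The Python sketch below is a toy model under stated assumptions: demand for each resource grows at a fixed compound monthly rate, each planned project adds a fixed amount of demand from its start month, and the resource names, figures and project are all hypothetical. It is not how vOperations Suite or Athene model capacity.

```python
def first_breach(capacity, baseline, monthly_growth, projects, months=36):
    """Return (month, resource) for the first limit exceeded, or None.

    Demand = baseline compounded at `monthly_growth`, plus the demand of
    every project whose start month has been reached (assumed model).
    """
    for month in range(1, months + 1):
        for resource, limit in capacity.items():
            demand = baseline[resource] * (1 + monthly_growth) ** month
            demand += sum(
                p["demand"].get(resource, 0)
                for p in projects
                if p["start_month"] <= month
            )
            if demand > limit:
                return month, resource
    return None


# Hypothetical data centre limits and current usage.
capacity = {"rack_units": 400, "power_kw": 120}
baseline = {"rack_units": 300, "power_kw": 80}

# What if a new platform lands in month 6?
projects = [
    {"name": "new-platform", "start_month": 6,
     "demand": {"rack_units": 20, "power_kw": 50}},
]

print(first_breach(capacity, baseline, 0.02, []))        # organic growth only
print(first_breach(capacity, baseline, 0.02, projects))  # with the project
```

Even a toy model like this shows why the strategy input matters: the same estate can run out of rack space through organic growth or hit a power limit much sooner once a single planned project is added.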

We’re going to need a bigger house to fit the one pane of glass into…

“One pane of glass” is a phrase I hear very often, but not something I’ve really seen so far. Given the many facets of a management solution I have touched on above, that single pane of glass is going to need to display a lot! With so many metrics and visualisations to put together, you’d have a very cluttered single pane. Consolidating data from many systems into a mash-up portal is about the best that can be done, and there isn’t a single framework to date that can really tick all the boxes. Given the lack of a “saviour” product you may feel disheartened, but have faith! As the ecosystem begins to realise that no single vendor can give you everything, and that an integrated management platform which can not only display consolidated data but also act as a databus to facilitate sharing between those discrete facets is very high on the enterprise wishlist, we may see something yet.

I’d like to leave you with some of the inspiration for this post, as seen on a recent “Demotivational Poster”: a quick reminder of perfection being in the eye of the beholder.
